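Prometheus is an open-source, metrics-based monitoring system that is widely used in cloud-native environments. This article shows how to automate the deployment and configuration of Prometheus and use it to monitor hosts.

This article covers the following topics:

- Introduction to Prometheus

- Deployment environment

- Downloading the packages

- Deploying the Prometheus components

- Viewing the monitoring data

- Triggering an alert

- Summary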

1.1 Introduction to Prometheus

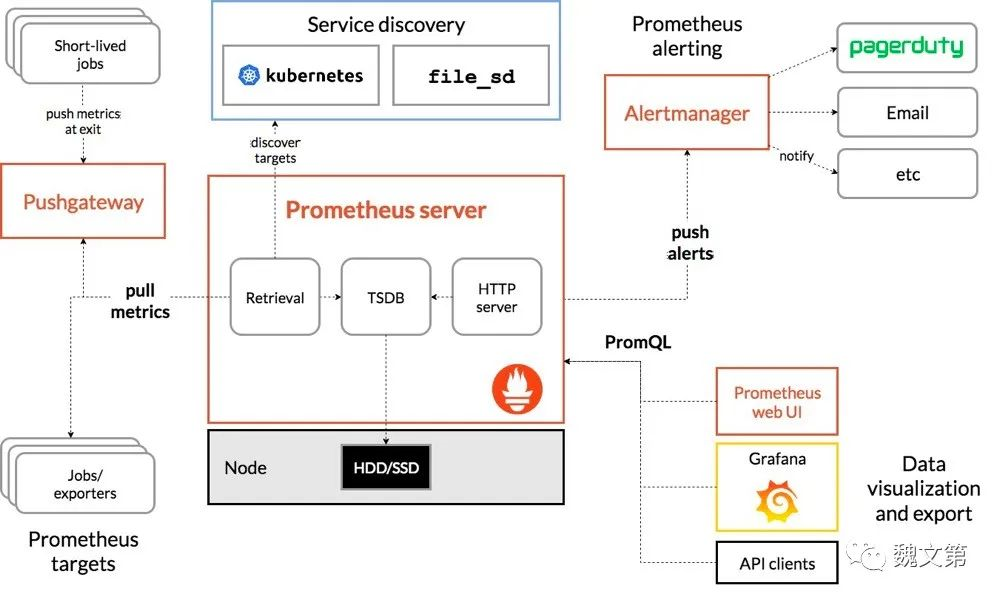

Figure 1.1 shows the Prometheus architecture and its ecosystem components:

Figure 1.1 Prometheus architecture (image from the official Prometheus website)

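Prometheus obtains its targets through service discovery and periodically scrapes the metrics that those targets expose over HTTP. It stores each metric as a time series, together with a timestamp and a set of label key-value pairs. The stored metrics can be queried with PromQL and displayed in a browser. Prometheus can also send alerts to Alertmanager, which delivers notifications through the configured channels.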
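To make the data model concrete, here is a minimal Python sketch that splits one line of the Prometheus text exposition format into a metric name, its labels, and a value. The sample line and the helper name parse_sample are illustrative assumptions, and the regular expressions cover only simple lines like the one shown, not the full format.

```python
import re

# Hypothetical sample line, in the shape a Node Exporter /metrics page uses.
SAMPLE = 'node_cpu_seconds_total{cpu="0",mode="idle"} 12345.67'

# metric_name{label="value",...} value [timestamp]
LINE_RE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)'
    r'(?:\{(?P<labels>.*)\})?'
    r'\s+(?P<value>\S+)'
    r'(?:\s+(?P<timestamp>-?\d+))?$'
)
LABEL_RE = re.compile(r'([a-zA-Z_][a-zA-Z0-9_]*)="((?:[^"\\]|\\.)*)"')


def parse_sample(line):
    """Return (name, labels, value) for one simple exposition-format line."""
    match = LINE_RE.match(line.strip())
    if match is None:
        raise ValueError(f"not a sample line: {line!r}")
    labels = dict(LABEL_RE.findall(match.group("labels") or ""))
    return match.group("name"), labels, float(match.group("value"))


name, labels, value = parse_sample(SAMPLE)
print(name, labels, value)
```

The name and the label set together identify one time series. Prometheus adds the scrape timestamp itself when the line does not carry one.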

In this example, the deployed components are Prometheus, Node Exporter, Alertmanager, and Grafana.

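1.2 Deployment Environment

Although four applications are deployed, two nodes are enough: one node serves as the Ansible control node and also runs Node Exporter; the other runs Prometheus, Node Exporter, Alertmanager, and Grafana.

1.2.1 Node Information

Both nodes are virtual machines with 2 CPU cores and 2 GB of memory.

Ansible node:

    # IP: 10.211.55.18
    # Hostname: automate-host.aiops.red
    # OS: Rocky Linux release 9.1

Ansible inventory hosts:

    [automate]
    automate-host.aiops.red

    [prometheus]
    prometheus.server.aiops.red

Prometheus node:

    # IP: 10.211.55.30
    # Hostname: prometheus.server.aiops.red
    # OS: Rocky Linux release 9.1

1.2.2 Node Requirements

For the installation to go smoothly, the nodes should meet the following requirements.

1.2.2.1 Clock Synchronization

Prometheus is a time series database and depends on accurate time; clock drift can lead to unexpected query results. The clocks on all nodes must therefore be kept in sync.

To automate clock synchronization, see the article "Automating NTP Service Deployment on Linux 9".

1.2.2.2 Hostname Resolution

The Ansible control node must be able to resolve the hostname of the Prometheus node and reach the node by that hostname.

To set this up, add an entry with the Prometheus node's IP address and hostname to /etc/hosts on the Ansible control node, or configure a DNS service as described in the article "Automating DNS Service Deployment on Linux 9".

1.2.2.3 Account Privileges

The Ansible control node must be able to log in to the Prometheus node without a password and run sudo without a password. The "Deployment Environment Requirements" section of "Automating NTP Service Deployment on Linux 9" describes how to set this up.

Once these requirements are met, deployment of the Prometheus monitoring system can begin.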

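1.3 Downloading the Packages

The distribution's dnf repositories do not provide packages for the Prometheus components, so a separate Role is created to download them.

1.3.1 Creating the Download Role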

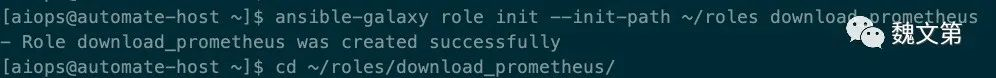

On the Ansible node, create the download_prometheus Role and switch to the ~/roles/download_prometheus/ directory:

    ansible-galaxy role init --init-path ~/roles download_prometheus
    cd ~/roles/download_prometheus/

Figure 1.2 Creating the download_prometheus Role

The download_prometheus Role downloads the packages needed to install the Prometheus components. It defines two list variables, package_url and unarchive_path. package_url holds the download URLs; unarchive_path holds the directories into which the packages are extracted. Edit defaults/main.yml and define the two variables as follows:

    ---
    # defaults file for download_prometheus
    package_url:
      - https://dl.grafana.com/enterprise/release/grafana-enterprise-9.3.1.linux-arm64.tar.gz
      - https://github.com/prometheus/alertmanager/releases/download/v0.24.0/alertmanager-0.24.0.linux-arm64.tar.gz
      - https://github.com/prometheus/node_exporter/releases/download/v1.5.0/node_exporter-1.5.0.linux-arm64.tar.gz
      - https://github.com/prometheus/prometheus/releases/download/v2.40.5/prometheus-2.40.5.linux-arm64.tar.gz
    unarchive_path:
      - ~/software/monitor_tools/grafana
      - ~/software/monitor_tools/alertmanager
      - ~/software/monitor_tools/node_exporter
      - ~/software/monitor_tools/prometheus

Grafana download page: https://grafana.com/grafana/download

Prometheus components download page: https://prometheus.io/download/

This example was run on ARM hosts, which is why the URLs point to linux-arm64 packages. Look up the packages that match your own operating system and architecture on these pages, then override the variable values.

Next, edit tasks/main.yml and define the tasks that download the packages:

    ---
    # tasks file for download_prometheus
    - name: create download and unarchive directory task
      ansible.builtin.file:
        path: "{{ item }}"
        state: directory
      with_items: "{{ unarchive_path }}"

    - name: download and unarchive tarball task
      ansible.builtin.unarchive:
        src: "{{ item.0 }}"
        dest: "{{ item.1 }}"
        remote_src: true
        extra_opts:
          - --strip-components=1
      with_together:
        - "{{ package_url }}"
        - "{{ unarchive_path }}"

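tasks/main.yml defines two tasks:

- Task 1: Uses the ansible.builtin.file module to create the directories defined by the unarchive_path variable.

- Task 2: Uses the ansible.builtin.unarchive module to extract the remote files (the packages defined by the package_url variable) into the target directories (defined by the unarchive_path variable).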
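The second task relies on with_together to walk both lists in parallel, so that item.0 is a URL and item.1 is the matching directory. The following Python sketch mimics that pairing with itertools.zip_longest; the function name together is an illustrative stand-in, not Ansible code.

```python
from itertools import zip_longest

package_url = [
    "https://dl.grafana.com/enterprise/release/grafana-enterprise-9.3.1.linux-arm64.tar.gz",
    "https://github.com/prometheus/alertmanager/releases/download/v0.24.0/alertmanager-0.24.0.linux-arm64.tar.gz",
    "https://github.com/prometheus/node_exporter/releases/download/v1.5.0/node_exporter-1.5.0.linux-arm64.tar.gz",
    "https://github.com/prometheus/prometheus/releases/download/v2.40.5/prometheus-2.40.5.linux-arm64.tar.gz",
]
unarchive_path = [
    "~/software/monitor_tools/grafana",
    "~/software/monitor_tools/alertmanager",
    "~/software/monitor_tools/node_exporter",
    "~/software/monitor_tools/prometheus",
]


def together(*lists):
    """Pair elements by position; shorter lists are padded with None."""
    return list(zip_longest(*lists))


pairs = together(package_url, unarchive_path)
for url, dest in pairs:
    # url plays the role of item.0, dest the role of item.1
    print(f"{url.rsplit('/', 1)[-1]} -> {dest}")
```

Because the pairing is purely positional, the two lists must stay in the same order and have the same length; a missing entry would surface as None on one side of a pair.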

That completes the download Role. Next, create a Playbook that runs the Role to download the packages.

1.3.2 Creating the Download Playbook

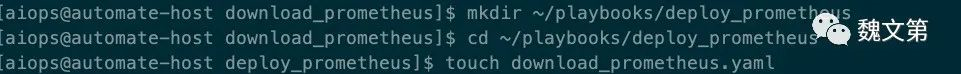

The download Playbook lives in the same directory as the deployment Playbook. Create the ~/playbooks/deploy_prometheus directory and, inside it, the download_prometheus.yaml Playbook file:

    mkdir -p ~/playbooks/deploy_prometheus
    cd ~/playbooks/deploy_prometheus
    vim download_prometheus.yaml

Figure 1.3 Creating the download Playbook

The content of download_prometheus.yaml is as follows:

    ---
    - name: download prometheus packages play
      hosts: localhost
      become: false
      gather_facts: false
      roles:
        - role: download_prometheus
          tags: download_prometheus
    ...

The file defines a single Play that runs the download_prometheus Role on localhost (the Ansible control node). All packages are extracted under ~/software/monitor_tools/ on the control node.

1.3.3 Running the Download

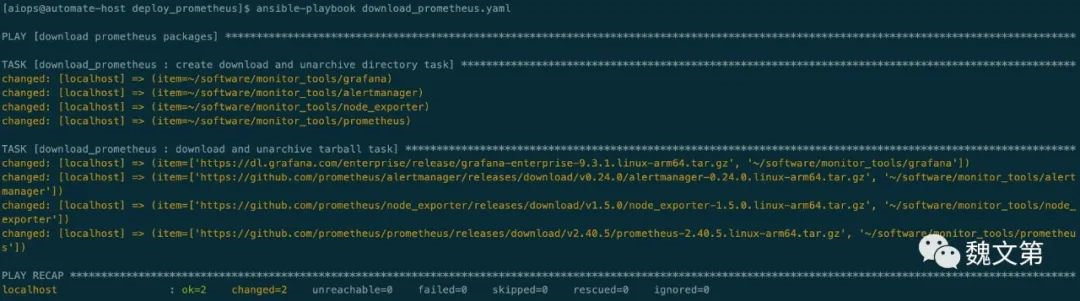

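Run the download_prometheus.yaml Playbook to download the packages:

    ansible-playbook download_prometheus.yaml

Figure 1.4 Downloading the packages

The Prometheus packages are downloaded from GitHub, so the Playbook may fail with a timeout error. If it does, retry it a few times or use another download source.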

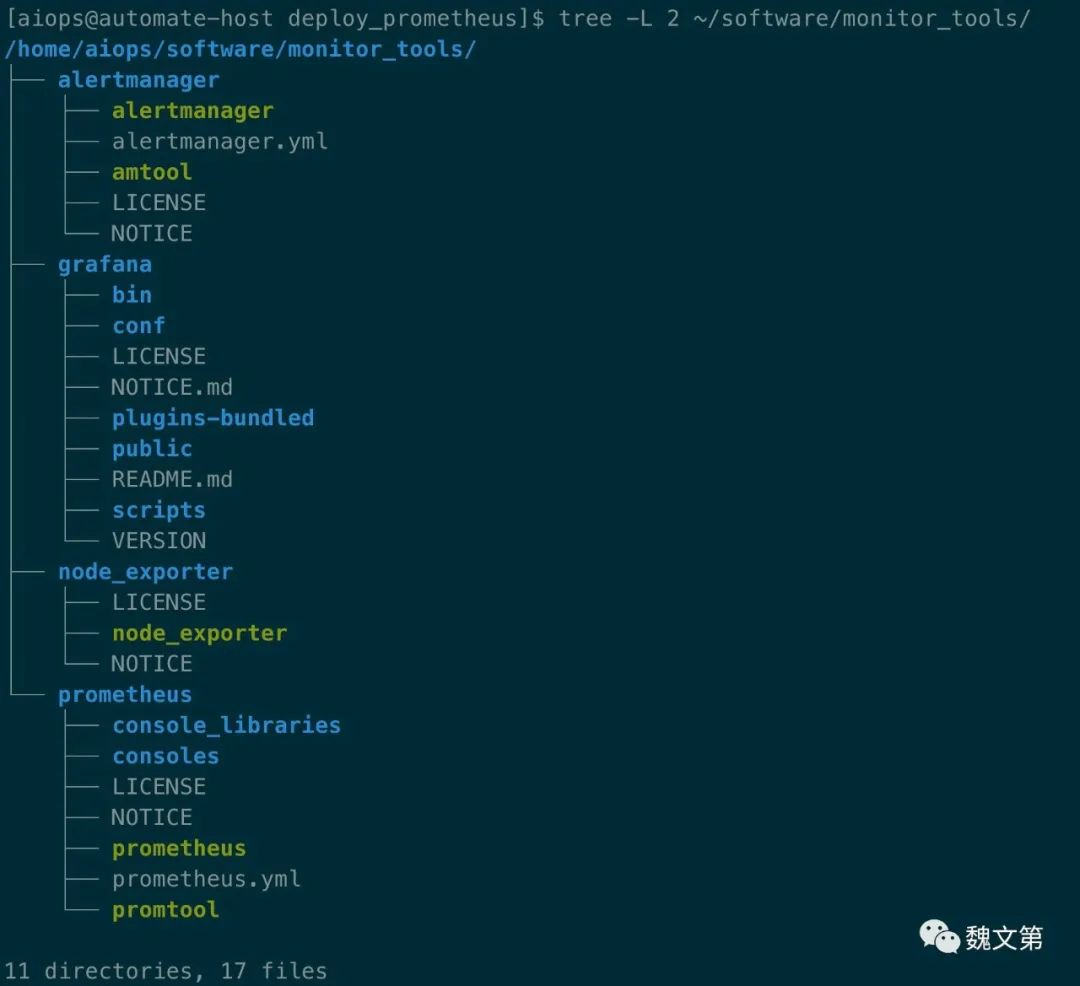

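After the command succeeds, inspect the result with the tree command:

    tree -L 2 ~/software/monitor_tools/

Figure 1.5 Directory structure after the download

The download only needs to succeed once; later deployments do not need to repeat it.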

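1.4 Deploying the Prometheus Components

The packages are ready, so the next step is deployment. This example covers installing and configuring prometheus, grafana, node_exporter, and alertmanager, each with its own deployment Role.

1.4.1 Creating the Role That Deploys Prometheus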

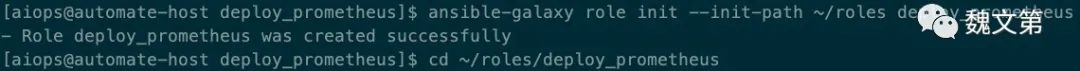

Start with Prometheus, the core service of the monitoring stack, which provides the monitoring system and the time series database. Create the deploy_prometheus Role:

    ansible-galaxy role init --init-path ~/roles deploy_prometheus
    cd ~/roles/deploy_prometheus

Figure 1.6 Creating the Role that deploys Prometheus

In tasks/main.yml, define the tasks that deploy prometheus:

    ---
    # tasks file for deploy_prometheus
    - name: "create {{ user_name }} user task"
      ansible.builtin.user:
        name: "{{ user_name }}"
        home: "{{ home_dir }}"
        shell: /sbin/nologin

    - name: copy the installation file task
      ansible.builtin.copy:
        src: "{{ unarchive_path.3 }}"
        dest: "{{ home_dir }}"
        owner: "{{ user_name }}"
        group: "{{ user_name }}"
        mode: "0755"

    - name: generate systemd unit files task
      ansible.builtin.template:
        src: prometheus.service.j2
        dest: /usr/lib/systemd/system/prometheus.service
        mode: "0644"
      notify: restart prometheus.service handler

    - name: generate configuration files task
      ansible.builtin.template:
        src: prometheus.yml.j2
        dest: "{{ home_dir }}/prometheus/prometheus.yaml"
        owner: "{{ user_name }}"
        group: "{{ user_name }}"
        mode: "0644"
      notify: restart prometheus.service handler

    - name: started prometheus service task
      ansible.builtin.systemd:
        name: prometheus.service
        state: started
        enabled: true
        daemon_reload: true

    # firewalld is provided by the ansible.posix collection, not ansible.builtin
    - name: turn on prometheus ports in the firewalld task
      ansible.posix.firewalld:
        port: "{{ prometheus_port }}/tcp"
        permanent: true
        immediate: true
        state: enabled

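The task file defines six tasks:

- Task 1: Creates the user that runs the Prometheus component services and sets its home directory. The user name is defined by the user_name variable and the home directory by the home_dir variable.

- Task 2: Copies the binaries and other files to the installation path. unarchive_path.3 refers to the fourth element of that list variable; indexes start at 0.

- Task 3: Generates the systemd unit file for the Prometheus service from a template.

- Task 4: Generates the configuration file for the Prometheus service from a template.

- Task 5: Reloads the unit files, starts the Prometheus service, and enables it at boot.

- Task 6: Opens the port that the Prometheus service listens on in the firewall; the port is defined by the prometheus_port variable.

When task 3 or task 4 changes something, it notifies the "restart prometheus.service handler" handler. The content of handlers/main.yml is as follows:

    ---
    # handlers file for deploy_prometheus
    - name: restart prometheus.service handler
      ansible.builtin.systemd:
        name: prometheus.service
        state: restarted
        daemon_reload: true

The handler restarts the Prometheus service.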

The two template files are as follows.

templates/prometheus.service.j2:

    [Unit]
    Description=Prometheus
    Wants=network.target
    After=network.target

    [Service]
    User={{ user_name }}
    Group={{ user_name }}
    Type=simple
    ExecStart={{ home_dir }}/prometheus/prometheus \
        --web.listen-address="0.0.0.0:{{ prometheus_port }}" \
        --config.file {{ home_dir }}/prometheus/prometheus.yaml \
        --storage.tsdb.path {{ home_dir }}/prometheus/ \
        --web.console.templates={{ home_dir }}/prometheus/consoles \
        --web.console.libraries={{ home_dir }}/prometheus/console_libraries
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target

templates/prometheus.yml.j2:

    # my global config
    global:
      scrape_interval: 15s
      evaluation_interval: 15s

    # set alert rules
    rule_files:
      - rules.yml

    alerting:
      alertmanagers:
        - static_configs:
            - targets:
                - {{ alertmanager_host }}:{{ alertmanager_port }}

    # scrape exporters
    scrape_configs:
      - job_name: "node"
        static_configs:
          - targets:
    {% for host in groups["all"] %}
              - "{{ host }}:{{ node_exporter_port }}"
    {% endfor %}

The "set alert rules" part is discussed together with the Alertmanager deployment.

In the "scrape exporters" part, a for loop automatically adds the Node Exporter on every inventory host as a scrape target.
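To see what that loop produces, here is a small Python sketch that reproduces the rendered targets list for the two-host inventory used in this article. It is a plain-Python stand-in for the Jinja2 loop, not Ansible's own templating; the helper names all_hosts and render_targets are illustrative assumptions.

```python
# Inventory groups from this article; the implicit "all" group is their union.
inventory_groups = {
    "automate": ["automate-host.aiops.red"],
    "prometheus": ["prometheus.server.aiops.red"],
}
node_exporter_port = 9100


def all_hosts(groups):
    """Return every host once, in inventory order (stand-in for groups['all'])."""
    hosts = []
    for members in groups.values():
        for host in members:
            if host not in hosts:
                hosts.append(host)
    return hosts


def render_targets(groups, port):
    """Build the 'targets' fragment that the template loop would emit."""
    lines = ["      - targets:"]
    lines += [f'          - "{host}:{port}"' for host in all_hosts(groups)]
    return "\n".join(lines)


rendered = render_targets(inventory_groups, node_exporter_port)
print(rendered)
```

Adding a host to the inventory and rerunning the Playbook is then enough to start scraping it, provided Node Exporter is deployed on that host as well.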

The variables used by this Role and the other Roles are defined later, in the Playbook.

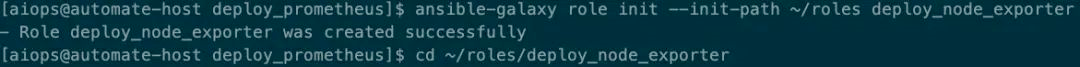

1.4.2 Creating the Role That Deploys Node Exporter

Node Exporter collects node information and exposes it as metrics over HTTP; the Prometheus server scrapes these metrics as monitoring data.

Create the deploy_node_exporter Role:

    ansible-galaxy role init --init-path ~/roles deploy_node_exporter
    cd ~/roles/deploy_node_exporter

Figure 1.7 Creating the Role that deploys Node Exporter

In tasks/main.yml, define the tasks that deploy Node Exporter:

    ---
    # tasks file for deploy_node_exporter
    - name: "create {{ user_name }} user task"
      ansible.builtin.user:
        name: "{{ user_name }}"
        home: "{{ home_dir }}"
        shell: /sbin/nologin

    - name: copy the installation file task
      ansible.builtin.copy:
        src: "{{ unarchive_path.2 }}"
        dest: "{{ home_dir }}"
        owner: "{{ user_name }}"
        group: "{{ user_name }}"
        mode: "0755"

    - name: generate systemd unit files task
      ansible.builtin.template:
        src: node-exporter.service.j2
        dest: /usr/lib/systemd/system/node-exporter.service
        mode: "0644"

    - name: started node exporter service task
      ansible.builtin.systemd:
        name: node-exporter.service
        state: started
        enabled: true
        daemon_reload: true

    # firewalld is provided by the ansible.posix collection, not ansible.builtin
    - name: turn on node exporter port in the firewalld task
      ansible.posix.firewalld:
        port: "{{ node_exporter_port }}/tcp"
        permanent: true
        immediate: true
        state: enabled

The task file contains five tasks:

- Task 1: Creates the user that runs the monitoring components.

- Task 2: Copies the deployment files to the installation directory.

- Task 3: Generates the unit file from a template.

- Task 4: Starts the Node Exporter service.

- Task 5: Opens the port that the Node Exporter service listens on in the firewall.

Content of the templates/node-exporter.service.j2 template:

    [Unit]
    Description=Node Exporter
    After=network.target

    [Service]
    User={{ user_name }}
    Group={{ user_name }}
    Type=simple
    ExecStart={{ home_dir }}/node_exporter/node_exporter \
        --web.listen-address=:{{ node_exporter_port }} \
        --collector.systemd \
        --collector.processes
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target

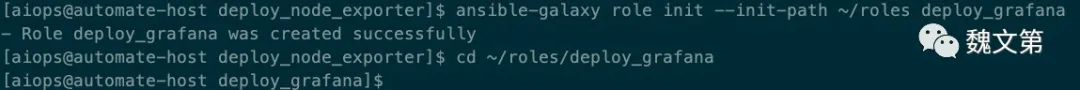

1.4.3 Creating the Role That Deploys Grafana

Grafana visualizes the data stored in Prometheus. Create the deploy_grafana Role:

    ansible-galaxy role init --init-path ~/roles deploy_grafana
    cd ~/roles/deploy_grafana

Figure 1.8 Creating the Role that deploys Grafana

In tasks/main.yml, define the tasks that deploy Grafana:

    ---
    # tasks file for deploy_grafana
    - name: "create {{ user_name }} user task"
      ansible.builtin.user:
        name: "{{ user_name }}"
        home: "{{ home_dir }}"
        shell: /sbin/nologin

    - name: copy the installation file task
      ansible.posix.synchronize:
        src: "{{ unarchive_path.0 }}"
        dest: "{{ home_dir }}"

    # recursive ownership change, replacing the original "chown -R" shell call
    - name: set the file owner task
      ansible.builtin.file:
        path: "{{ home_dir }}"
        state: directory
        owner: "{{ user_name }}"
        group: "{{ user_name }}"
        recurse: true

    - name: create configuration directories task
      ansible.builtin.file:
        path: "{{ item }}"
        state: directory
        mode: "0755"
      with_items:
        - /etc/grafana/provisioning/datasources
        - /etc/grafana/provisioning/dashboards
        - /etc/grafana/provisioning/plugins
        - /etc/grafana/provisioning/notifiers
        - /etc/grafana/provisioning/alerting
        - "{{ grafana_dashboards_path }}"

    - name: create grafana data and logs directories task
      ansible.builtin.file:
        path: "{{ item }}"
        state: directory
        owner: "{{ user_name }}"
        group: "{{ user_name }}"
        mode: "0755"
      with_items:
        - "{{ home_dir }}/data/grafana"
        - /var/log/grafana

    - name: copy configuration file task
      ansible.builtin.copy:
        src: grafana.ini
        dest: /etc/grafana

    - name: copy datasource config file task
      ansible.builtin.template:
        src: datasource.yaml.j2
        dest: /etc/grafana/provisioning/datasources/datasource.yaml

    - name: copy dashboard config file task
      ansible.builtin.template:
        src: dashboards.yaml.j2
        dest: /etc/grafana/provisioning/dashboards/dashboards.yaml

    # get_url takes the download address in "url", not "src"
    - name: download dashboard json file task
      ansible.builtin.get_url:
        url: https://grafana.com/api/dashboards/1860/revisions/29/download
        dest: "{{ grafana_dashboards_path }}/node-exporter-full.json"

    - name: copy environment file task
      ansible.builtin.template:
        src: grafana-server.j2
        dest: /etc/sysconfig/grafana-server

    - name: generate systemd unit files task
      ansible.builtin.template:
        src: grafana.service.j2
        dest: /usr/lib/systemd/system/grafana.service
        mode: "0644"

    - name: started grafana service task
      ansible.builtin.systemd:
        name: grafana.service
        state: started
        enabled: true
        daemon_reload: true

    # firewalld is provided by the ansible.posix collection, not ansible.builtin
    - name: turn on the firewall of grafana service task
      ansible.posix.firewalld:
        port: 3000/tcp
        permanent: true
        immediate: true
        state: enabled

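This part contains thirteen tasks:

- Task 1: Creates the user and its home directory.

- Task 2: Copies the files with the synchronize module, which wraps rsync.

- Task 3: Changes the owner and group of the installed files.

- Task 4: Creates the Grafana configuration directory, the provisioning directories, and the dashboard directory.

- Task 5: Creates the log directory and the data directory.

- Task 6: Copies the configuration file to the configuration directory.

- Task 7: Copies the data source configuration file to its directory so that the datasource is provisioned automatically.

- Task 8: Copies the dashboard configuration file to its directory.

- Task 9: Downloads the dashboard file to its directory so that the dashboard is provisioned automatically.

- Task 10: Copies the environment file.

- Task 11: Generates the unit file.

- Task 12: Starts the Grafana service.

- Task 13: Opens the firewall port.

The files/grafana.ini configuration file is a copy of conf/sample.ini from the installation directory, so its content is not shown here.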

Content of the templates/datasource.yaml.j2 template:

    # config file version
    apiVersion: 1

    # list of datasources that should be deleted from the database
    deleteDatasources:
      - name: Prometheus
        orgId: 1

    # list of datasources to insert/update depending
    # whats available in the database
    datasources:
      # <string, required> name of the datasource. Required
      - name: Prometheus
        # <string, required> datasource type. Required
        type: prometheus
        # <string, required> access mode. direct or proxy. Required
        access: proxy
        # <int> org id. will default to orgId 1 if not specified
        orgId: 1
        # <string> url
        url: http://{{ prometheus_host }}:{{ prometheus_port }}

Content of the templates/dashboards.yaml.j2 template:

    apiVersion: 1

    providers:
      # <string> an unique provider name. Required
      - name: 'default'
        # <int> Org id. Default to 1
        orgId: 1
        # <string> name of the dashboard folder.
        folder: ''
        # <string> folder UID. will be automatically generated if not specified
        folderUid: ''
        # <string> provider type. Default to 'file'
        type: file
        # <bool> disable dashboard deletion
        disableDeletion: false
        # <int> how often Grafana will scan for changed dashboards
        updateIntervalSeconds: 100
        # <bool> allow updating provisioned dashboards from the UI
        allowUiUpdates: false
        options:
          # <string, required> path to dashboard files on disk. Required when using the 'file' type
          path: {{ grafana_dashboards_path }}
          # <bool> use folder names from filesystem to create folders in Grafana
          foldersFromFilesStructure: true

Content of the templates/grafana-server.j2 template:

    GRAFANA_USER={{ user_name }}
    GRAFANA_GROUP={{ user_name }}
    GRAFANA_HOME={{ home_dir }}/grafana
    LOG_DIR=/var/log/grafana
    DATA_DIR={{ home_dir }}/data/grafana
    MAX_OPEN_FILES=10000
    CONF_DIR=/etc/grafana
    CONF_FILE=/etc/grafana/grafana.ini
    RESTART_ON_UPGRADE=true
    PLUGINS_DIR={{ home_dir }}/grafana/plugins
    PROVISIONING_CFG_DIR=/etc/grafana/provisioning
    # Only used on systemd systems
    PID_FILE_DIR=/var/run/grafana

Content of the templates/grafana.service.j2 template (User and Group use the user_name variable, so they stay consistent with the user created by the Role):

    [Unit]
    Description=Grafana instance
    Documentation=http://docs.grafana.org
    Wants=network-online.target
    After=network-online.target

    [Service]
    EnvironmentFile=/etc/sysconfig/grafana-server
    User={{ user_name }}
    Group={{ user_name }}
    Type=notify
    Restart=on-failure
    WorkingDirectory={{ home_dir }}/grafana
    RuntimeDirectory=grafana
    RuntimeDirectoryMode=0750
    ExecStart={{ home_dir }}/grafana/bin/grafana-server \
        --config=${CONF_FILE} \
        --pidfile=${PID_FILE_DIR}/grafana-server.pid \
        --packaging=tar.gz \
        cfg:default.paths.logs=${LOG_DIR} \
        cfg:default.paths.data=${DATA_DIR} \
        cfg:default.paths.plugins=${PLUGINS_DIR} \
        cfg:default.paths.provisioning=${PROVISIONING_CFG_DIR}
    LimitNOFILE=10000
    TimeoutStopSec=20
    CapabilityBoundingSet=
    DeviceAllow=
    LockPersonality=true
    MemoryDenyWriteExecute=false
    NoNewPrivileges=true
    PrivateDevices=true
    PrivateTmp=true
    ProtectClock=true
    ProtectControlGroups=true
    ProtectHome=true
    ProtectHostname=true
    ProtectKernelLogs=true
    ProtectKernelModules=true
    ProtectKernelTunables=true
    ProtectProc=invisible
    ProtectSystem=full
    RemoveIPC=true
    RestrictAddressFamilies=AF_INET AF_INET6 AF_UNIX
    RestrictNamespaces=true
    RestrictRealtime=true
    RestrictSUIDSGID=true
    SystemCallArchitectures=native
    UMask=0027

    [Install]
    WantedBy=multi-user.target

1.4.4 Creating the Role That Deploys Alertmanager

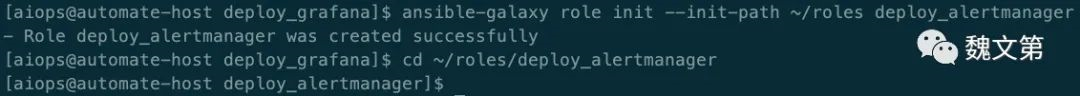

Alertmanager sends the alert notifications. Create the deploy_alertmanager Role:

    ansible-galaxy role init --init-path ~/roles deploy_alertmanager
    cd ~/roles/deploy_alertmanager

Figure 1.9 Creating the Role that deploys Alertmanager

In tasks/main.yml, define the tasks that deploy Alertmanager:

    ---
    # tasks file for deploy_alertmanager
    - name: "create {{ user_name }} user task"
      ansible.builtin.user:
        name: "{{ user_name }}"
        home: "{{ home_dir }}"
        shell: /sbin/nologin

    - name: create configuration directory task
      ansible.builtin.file:
        path: /etc/alertmanager
        state: directory
        mode: "0755"

    - name: create data directory task
      ansible.builtin.file:
        path: "{{ home_dir }}/data/alertmanager"
        state: directory
        owner: "{{ user_name }}"
        group: "{{ user_name }}"
        mode: "0755"

    - name: copy the installation file task
      ansible.builtin.copy:
        src: "{{ unarchive_path.1 }}"
        dest: "{{ home_dir }}"
        owner: "{{ user_name }}"
        group: "{{ user_name }}"
        mode: "0755"

    - name: generate systemd unit files task
      ansible.builtin.template:
        src: alertmanager.service.j2
        dest: /usr/lib/systemd/system/alertmanager.service
        mode: "0644"

    - name: create alert rules file task
      ansible.builtin.file:
        path: "{{ home_dir }}/prometheus/rules.yml"
        state: touch

    - name: insert the rule to the rule file task
      ansible.builtin.blockinfile:
        path: "{{ home_dir }}/prometheus/rules.yml"
        block: |
          groups:
          - name: NodeAlert
            rules:
            - alert: InstanceDown
              expr: up == 0
              for: 1m
      notify: restart prometheus.service handler

    - name: generate configuration files task
      ansible.builtin.template:
        src: alertmanager.yml.j2
        dest: "/etc/alertmanager/alertmanager.yml"
        owner: "{{ user_name }}"
        group: "{{ user_name }}"
        mode: "0644"
      notify: restart alertmanager.service handler

    - name: started alertmanager service task
      ansible.builtin.systemd:
        name: alertmanager.service
        state: started
        enabled: true
        daemon_reload: true

    # firewalld is provided by the ansible.posix collection, not ansible.builtin
    - name: turn on alertmanager ports in the firewalld task
      ansible.posix.firewalld:
        port: "{{ alertmanager_port }}/tcp"
        permanent: true
        immediate: true
        state: enabled

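Ten tasks run here:

- Task 1: Creates the user.

- Task 2: Creates the configuration directory for Alertmanager.

- Task 3: Creates the data directory for Alertmanager.

- Task 4: Copies the binaries and other files.

- Task 5: Generates the unit file from a template.

- Task 6: Creates the file that holds the alerting rules.

- Task 7: Inserts the rule content into the rules file.

- Task 8: Generates the Alertmanager configuration file.

- Task 9: Starts the Alertmanager service.

- Task 10: Opens the port defined by alertmanager_port in the firewall.

Tasks 7 and 8 notify Handlers. The content of handlers/main.yml is as follows:

    ---
    # handlers file for deploy_alertmanager
    - name: restart alertmanager.service handler
      ansible.builtin.systemd:
        name: alertmanager.service
        state: restarted

    - name: restart prometheus.service handler
      ansible.builtin.systemd:
        name: prometheus.service
        state: restarted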

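Alerting has two parts: alerting rules added to Prometheus define the logic that raises an alert, and Alertmanager turns the fired alerts into notifications (email or other channels).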
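The InstanceDown rule in the task file uses expr: up == 0 with for: 1m, so a target has to stay down for a full minute before the alert fires. The following Python sketch is a simplified model of that behaviour, assuming one rule evaluation every 15 seconds (the evaluation_interval from prometheus.yml.j2). The function name alert_states is an illustrative assumption, and the model ignores details such as staleness handling.

```python
def alert_states(up_samples, interval_s=15, for_s=60):
    """Return the alert state at each evaluation for the rule 'up == 0, for: 1m'.

    up_samples holds the value of 'up' seen at each evaluation (1 = scrape ok,
    0 = scrape failed). States are 'inactive', 'pending', or 'firing'.
    """
    states = []
    active_since = None  # time at which the condition first became true
    for step, up in enumerate(up_samples):
        now = step * interval_s
        if up == 0:
            if active_since is None:
                active_since = now
            held = now - active_since
            states.append("firing" if held >= for_s else "pending")
        else:
            active_since = None  # condition cleared, the alert resets
            states.append("inactive")
    return states


# Target healthy, then down for five evaluations, then healthy again.
timeline = alert_states([1, 0, 0, 0, 0, 0, 1])
print(timeline)
```

In this model the alert is pending for the first minute of the outage and is only handed to Alertmanager once it is firing, which filters out short blips.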

The Prometheus configuration template prometheus.yml.j2 shown earlier already contains the part that loads the rules and points to Alertmanager:

    rule_files:
      - rules.yml

    alerting:
      alertmanagers:
        - static_configs:
            - targets:
                - {{ alertmanager_host }}:{{ alertmanager_port }}

In the templates/ directory, edit the Alertmanager configuration file alertmanager.yml.j2 to send alerts by email. The content is roughly as follows and must be replaced with real values:

    global:
      # replace with the real SMTP server as host:port; Alertmanager expects the port to be included
      smtp_smarthost: 'smtp.exmail.qq.com'
      smtp_from: 'example@aiops.red'
      smtp_auth_username: 'example@aiops.red'
      smtp_auth_password: 'password'
      smtp_require_tls: true
      resolve_timeout: 10s

    route:
      receiver: notify-email

    receivers:
      - name: notify-email
        email_configs:
          - to: "ops@aiops.red"

Content of the templates/alertmanager.service.j2 template:

    [Unit]
    Description=Alert Manager
    Wants=network-online.target
    After=network-online.target

    [Service]
    Type=simple
    User={{ user_name }}
    Group={{ user_name }}
    ExecStart={{ home_dir }}/alertmanager/alertmanager \
        --config.file=/etc/alertmanager/alertmanager.yml \
        --storage.path={{ home_dir }}/data/alertmanager
    Restart=always

    [Install]
    WantedBy=multi-user.target

The deployment Roles for all components are now in place. Next, use them in a Playbook.

1.4.5 Creating the Deployment Playbook

Switch to the ~/playbooks/deploy_prometheus/ directory that was created for the download Playbook (mkdir -p creates it if it does not exist yet):

    mkdir -p ~/playbooks/deploy_prometheus/
    cd ~/playbooks/deploy_prometheus/

To define the variables, create a vars/ directory and, inside it, a variable.yaml file with the following content:

    ---
    # variables for the Prometheus deployment playbooks
    package_url:
      - https://dl.grafana.com/enterprise/release/grafana-enterprise-9.3.1.linux-arm64.tar.gz
      - https://github.com/prometheus/alertmanager/releases/download/v0.24.0/alertmanager-0.24.0.linux-arm64.tar.gz
      - https://github.com/prometheus/node_exporter/releases/download/v1.5.0/node_exporter-1.5.0.linux-arm64.tar.gz
      - https://github.com/prometheus/prometheus/releases/download/v2.40.5/prometheus-2.40.5.linux-arm64.tar.gz
    unarchive_path:
      - ~/software/monitor_tools/grafana
      - ~/software/monitor_tools/alertmanager
      - ~/software/monitor_tools/node_exporter
      - ~/software/monitor_tools/prometheus

    user_name: monitor
    home_dir: /opt/monitor
    grafana_dashboards_path: /var/lib/grafana/dashboard
    alertmanager_host: localhost
    alertmanager_port: 9093
    prometheus_host: localhost
    prometheus_port: 9090
    node_exporter_port: 9100
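Each deployment Role picks its source directory by position (unarchive_path.0 through unarchive_path.3), so the order of this list matters. The following Python sketch is one way to sanity-check that the order still matches the Roles after the variables are edited. The ROLE_INDEX mapping is transcribed from the task files above; the helper names are illustrative assumptions.

```python
unarchive_path = [
    "~/software/monitor_tools/grafana",
    "~/software/monitor_tools/alertmanager",
    "~/software/monitor_tools/node_exporter",
    "~/software/monitor_tools/prometheus",
]

# Index that each Role uses in its "copy the installation file" task.
ROLE_INDEX = {
    "deploy_grafana": 0,
    "deploy_alertmanager": 1,
    "deploy_node_exporter": 2,
    "deploy_prometheus": 3,
}


def component_for(role, paths):
    """Return the directory name that the given Role would copy."""
    return paths[ROLE_INDEX[role]].rstrip("/").rsplit("/", 1)[-1]


def mismatches(paths):
    """List Roles whose index points at a directory for another component."""
    prefix = "deploy_"
    return [
        role for role in ROLE_INDEX
        if component_for(role, paths) != role[len(prefix):]
    ]


print(mismatches(unarchive_path))
```

An empty list means every Role copies the directory named after its own component; any Role listed would deploy the wrong package.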

Create the deploy_all.yaml Playbook file with the following content:

    ---
    - name: deploy prometheus service play
      hosts: prometheus
      become: true
      gather_facts: false
      vars_files:
        - variable.yaml
      roles:
        - role: deploy_prometheus
          tags: deploy_prometheus

    - name: deploy node exporter service play
      hosts: all
      become: true
      gather_facts: false
      vars_files:
        - variable.yaml
      roles:
        - role: deploy_node_exporter
          tags: deploy_node_exporter

    - name: deploy grafana service play
      hosts: prometheus
      become: true
      gather_facts: false
      vars_files:
        - variable.yaml
      roles:
        - role: deploy_grafana
          tags: deploy_grafana

    - name: deploy alertmanager service play
      hosts: prometheus
      become: true
      gather_facts: false
      vars_files:
        - variable.yaml
      roles:
        - role: deploy_alertmanager
          tags: deploy_alertmanager
    ...

The deploy_all.yaml Playbook contains four Plays, each of which applies a different Role.

1.4.6 Deploying the Components

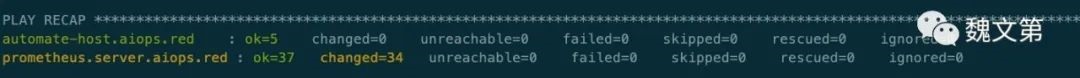

Run the deploy_all.yaml Playbook to install and configure the Prometheus components:

    ansible-playbook deploy_all.yaml

Figure 1.10 Deploying the Prometheus components

Once the deployment has completed successfully, open a browser and visit the Grafana page.

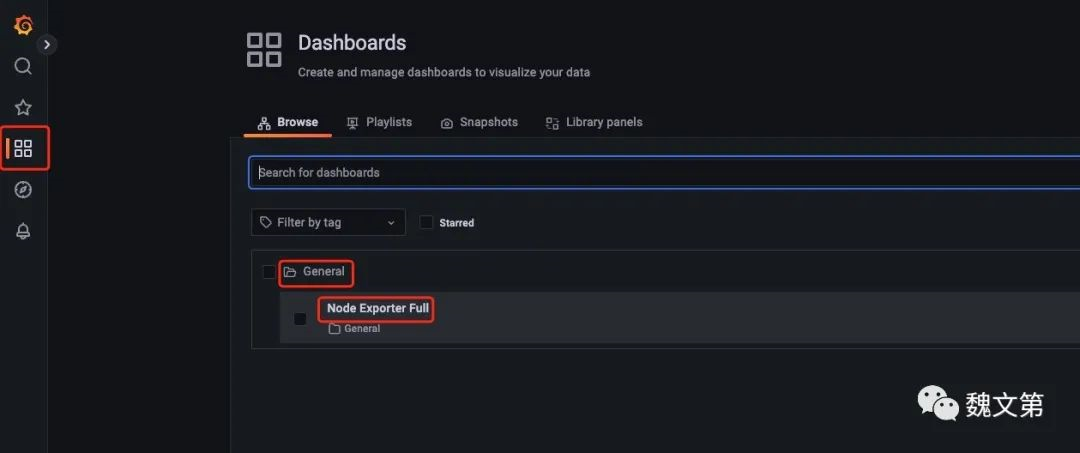

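1.5 Viewing the Monitoring Data

In a browser, open http://10.211.55.30:3000 and log in with the user name admin and the password admin. After you set a new password, Grafana opens. Click the button in the red box in the figure below to view the monitoring page:

Figure 1.11 Dashboard

Open the node monitoring page:

Figure 1.12 Node monitoring page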

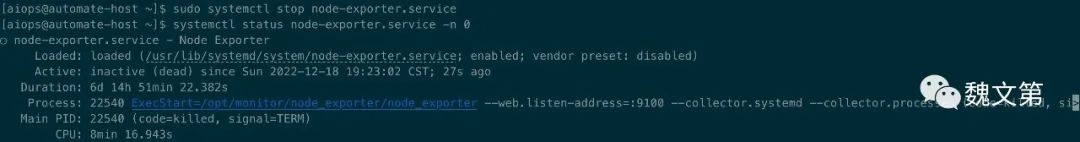

1.6 Triggering an Alert

Stop the Node Exporter service on the Ansible control node to simulate a node failure:

    sudo systemctl stop node-exporter.service

Figure 1.13 Stopping node-exporter.service
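The failure can also be confirmed from Prometheus itself, because the up metric drops to 0 for a target whose scrape fails. The following Python sketch builds an instant-query URL for the Prometheus HTTP API (/api/v1/query) and extracts the down instances from a response. SAMPLE_RESPONSE is a hypothetical payload written by hand in the documented response shape, with invented timestamps; the helper names are illustrative assumptions.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

PROMETHEUS_URL = "http://10.211.55.30:9090"  # Prometheus node from this article


def query_url(base, expr):
    """Build the instant-query URL for a PromQL expression."""
    return f"{base}/api/v1/query?{urlencode({'query': expr})}"


def fetch(base, expr, timeout=5):
    """Run the query against a live Prometheus server (needs network access)."""
    with urlopen(query_url(base, expr), timeout=timeout) as resp:
        return json.load(resp)


def down_instances(payload):
    """Return the instances whose 'up' sample is 0 in a query response."""
    if payload.get("status") != "success":
        raise ValueError(f"query failed: {payload}")
    return sorted(
        sample["metric"].get("instance", "?")
        for sample in payload["data"]["result"]
        if sample["value"][1] == "0"
    )


# Hypothetical response for the query "up" after the service was stopped.
SAMPLE_RESPONSE = {
    "status": "success",
    "data": {
        "resultType": "vector",
        "result": [
            {"metric": {"__name__": "up", "instance": "automate-host.aiops.red:9100", "job": "node"},
             "value": [1700000000.0, "0"]},
            {"metric": {"__name__": "up", "instance": "prometheus.server.aiops.red:9100", "job": "node"},
             "value": [1700000000.0, "1"]},
        ],
    },
}

print(query_url(PROMETHEUS_URL, "up"))
print(down_instances(SAMPLE_RESPONSE))
```

Against the real deployment, down_instances(fetch(PROMETHEUS_URL, "up")) would perform the same check with live data.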

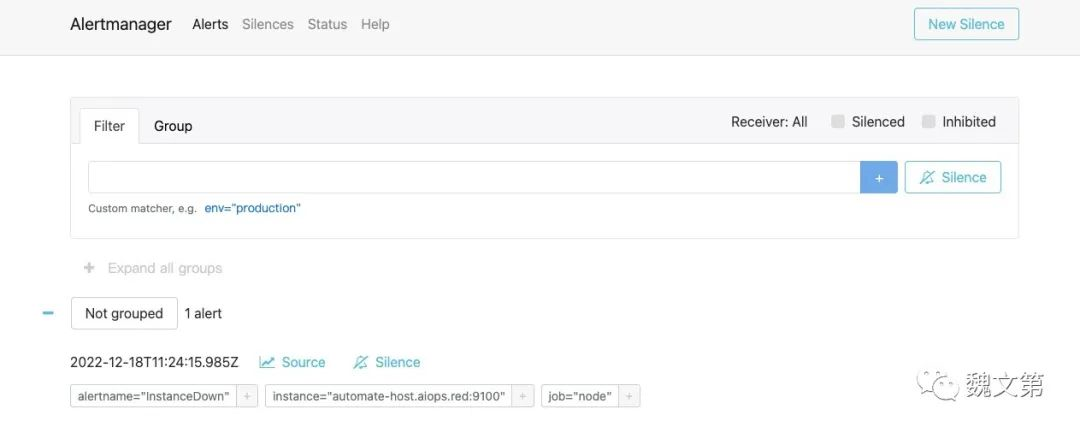

Check the Alertmanager page at http://10.211.55.30:9093/#/alerts:

Figure 1.14 Alerts

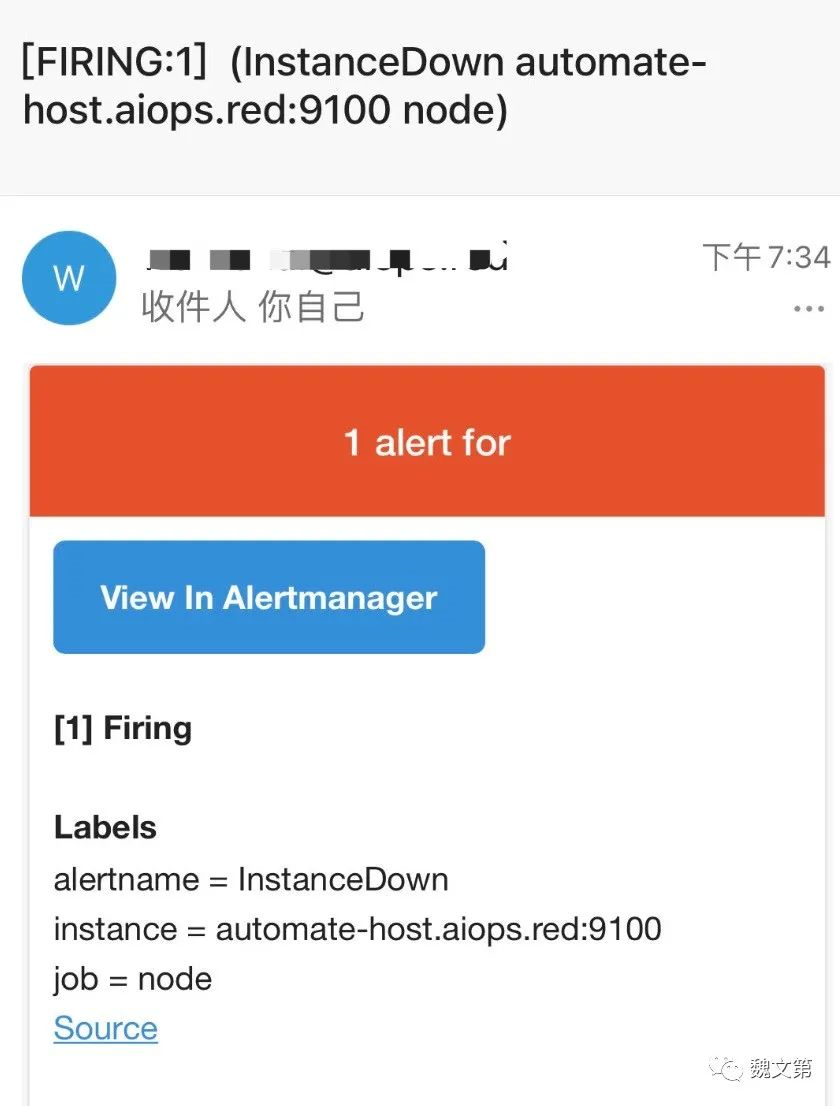

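If email alerting is configured correctly, the alert email should now be in the inbox:

Figure 1.15 Email alert

1.7 Summary

Prometheus is a mainstream monitoring system for cloud-native environments. This article walked through automating the deployment and configuration of the Prometheus components on Rocky Linux 9, resulting in node monitoring and failure alerting. The same steps also apply to other RPM-based Linux distributions.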

Author: Gustav

Copyright notice: Unless otherwise stated, all articles on this blog are licensed under BY-NC-SA. Please credit the source when reposting!